Multi-Task Learning

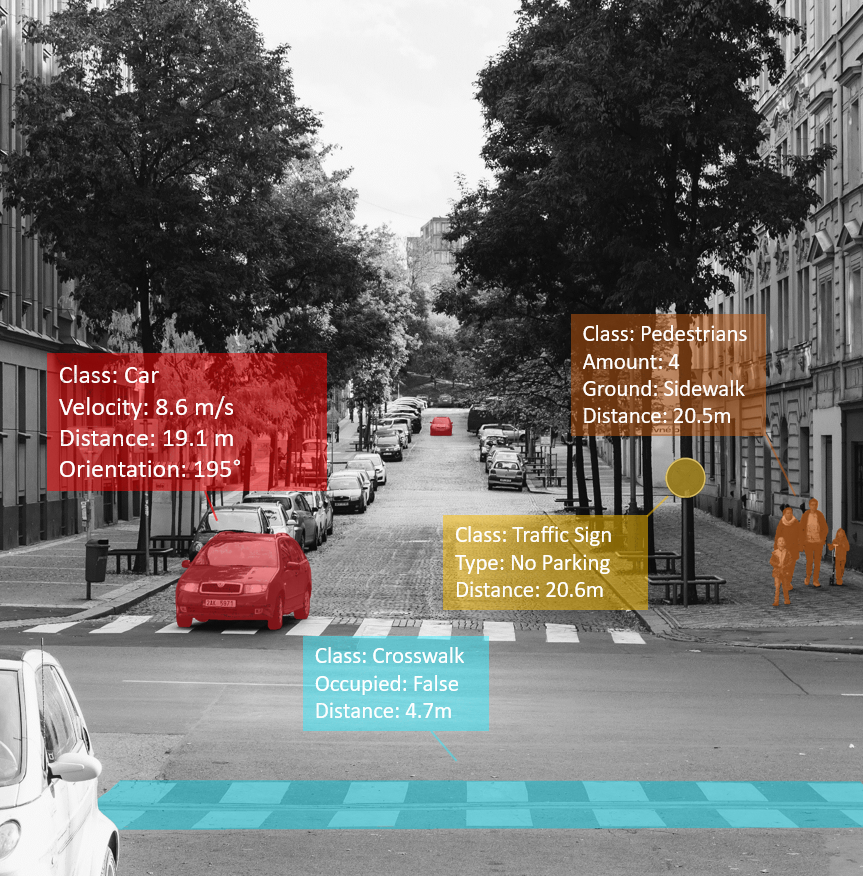

As vehicles become more autonomous, they need more systems for intelligent environment perception, and those systems must be more accurate. Classical image processing algorithms are limited in their capabilities and accuracy, so deep-learning-based systems are used increasingly often. These systems, in turn, push today's hardware to its limits.

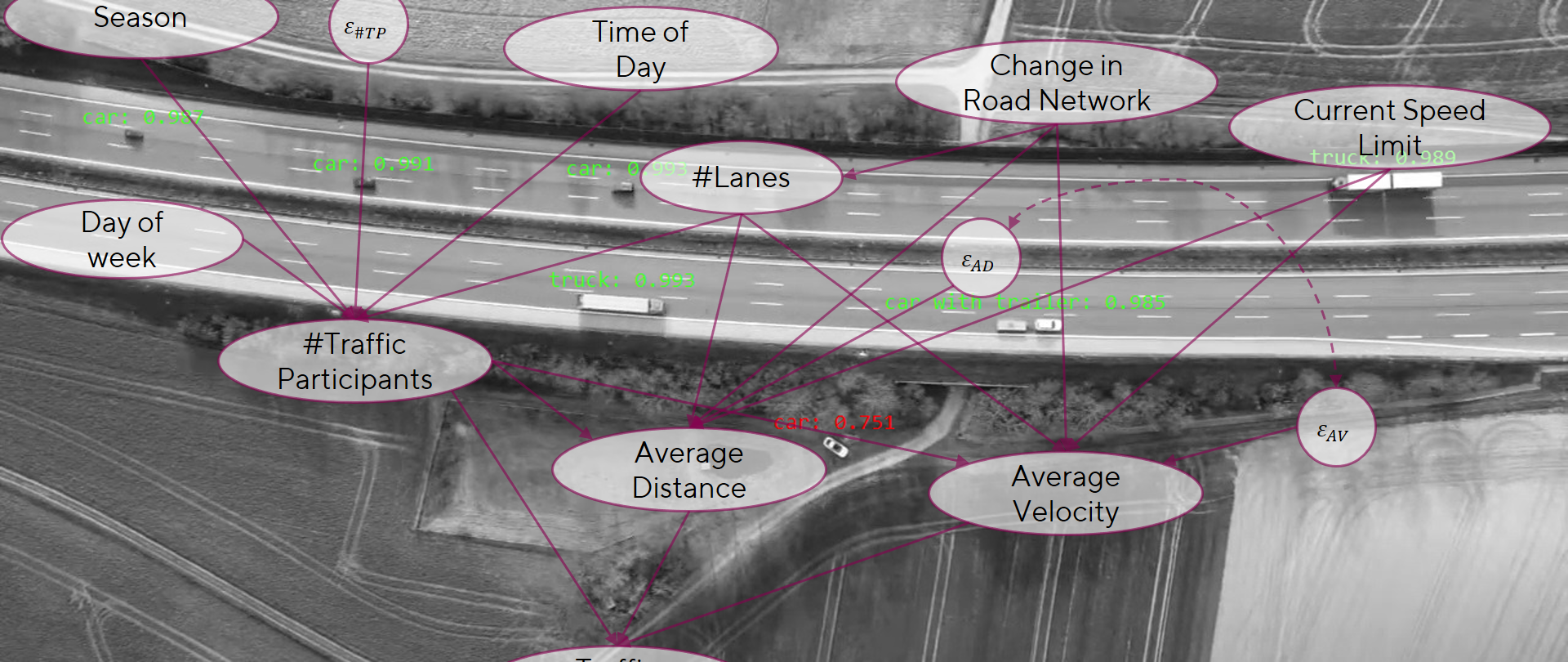

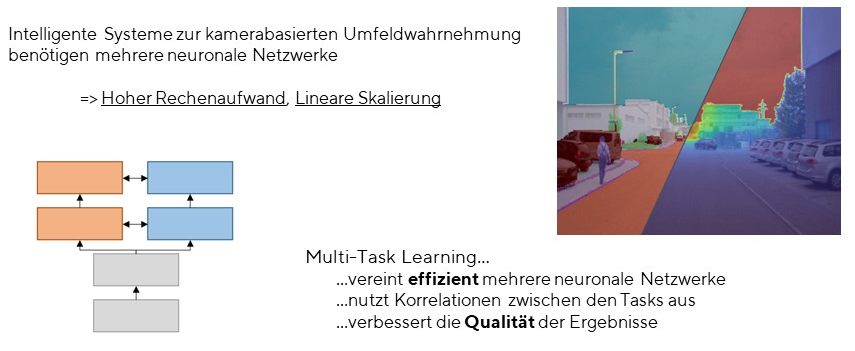

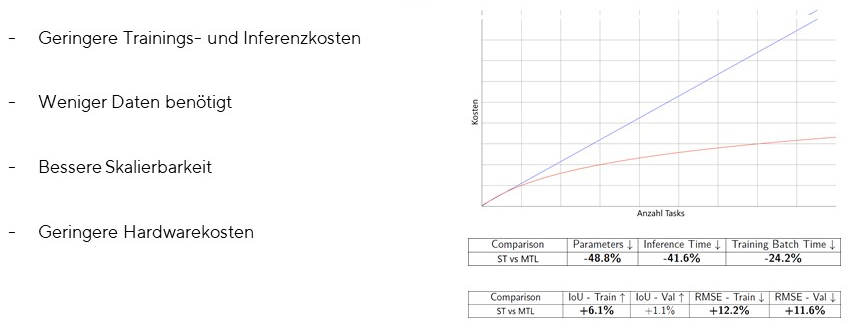

Multi-task learning makes it possible to solve several computer vision tasks simultaneously and to merge several environment perception systems into one. This can reduce the required computational effort and improve the quality of the results. Multi-task learning is therefore an integral building block for running deep-learning-based environment perception efficiently on today's embedded hardware.

One problem with computer-vision-based environment perception is that classical algorithms are limited in their precision, robustness, and range of application. For this reason, newer approaches try to solve these image processing tasks with convolutional neural networks or other machine learning methods. The drawback of using convolutional neural networks for these tasks is that they usually require large amounts of computational resources, yet each network solves only a single task.

A complex environment perception system that must solve several tasks simultaneously would therefore need several convolutional neural networks. This multiplies the required computational resources, so such a system is currently not viable for most real-world applications.
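The scaling argument can be made concrete with a simplified cost model. It assumes that each network splits into a backbone and a task head, that all tasks could use the same backbone, and that costs simply add up. With N tasks, separate networks cost N · (backbone + head), while a shared backbone costs backbone + N · head. The cost values in the example are hypothetical units chosen for illustration, not measurements.

```python
def separate_networks_cost(num_tasks: int, backbone_cost: float, head_cost: float) -> float:
    """Compute per frame when every task runs its own full network (backbone + head)."""
    return num_tasks * (backbone_cost + head_cost)


def shared_backbone_cost(num_tasks: int, backbone_cost: float, head_cost: float) -> float:
    """Compute per frame when all tasks share one backbone and keep separate heads."""
    return backbone_cost + num_tasks * head_cost


if __name__ == "__main__":
    # Hypothetical cost units, chosen only for illustration (not measured values).
    tasks, backbone, head = 3, 10.0, 1.0
    print(separate_networks_cost(tasks, backbone, head))  # 33.0
    print(shared_backbone_cost(tasks, backbone, head))    # 13.0
```

Under these assumptions, the backbone cost grows linearly with the number of tasks for separate networks but is paid only once with a shared backbone. Real savings depend on how large the backbone is relative to the heads.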

To reduce the required computational resources and to improve both the results and overall performance, more and more researchers are experimenting with multi-task learning based on convolutional neural networks.

Applications: environment perception for driver assistance systems and autonomous vehicles

Applications: improving the quality and runtime of multiple computer vision tasks